Universität Konstanz, Summer Semester 2015, Department of Mathematics and Statistics

Prof. Dr. Stefan Volkwein, Sabrina Rogg

Optimization

http://www.math.uni-konstanz.de/numerik/personen/rogg/de/teaching/

Program 2 (6 Points)

Submission by E-Mail: 2015/06/08, 10:00 h

Optimization with boundary constraints

Implementation of the Gradient Projection Algorithm

So far we have looked for a (local) minimizer $x^* \in \mathbb{R}^n$ of a sufficiently smooth, real-valued function $f : \mathbb{R}^n \to \mathbb{R}$ on an open set $\Omega \subseteq \mathbb{R}^n$:

$$x^* = \operatorname*{arg\,min}_{x \in \Omega} f(x).$$

The first-order necessary optimality condition is $\nabla f(x^*) = 0$.

If $\Omega$ is given as the closed and bounded domain

$$\Omega = \prod_{i=1}^{n} [a_i, b_i] = \{x \in \mathbb{R}^n \mid a_i \le x_i \le b_i \text{ for all } i = 1, \dots, n\}, \quad a_i, b_i \in \mathbb{R},\ a_i < b_i,$$

the above condition must be changed to admit the possibility that a (local) minimizer is located on the boundary of the domain. In Exercise 11 we prove the following modified first order condition:

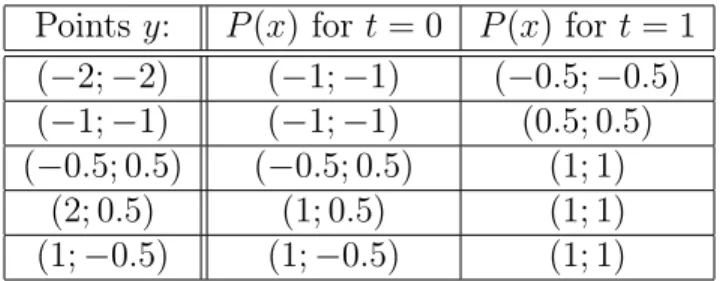

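$$\nabla f(x^*)^\top (x - x^*) \ge 0 \quad \text{for all } x \in \Omega. \tag{1}$$

The canonical projection of $x \in \mathbb{R}^n$ onto the closed set $\Omega$ is given by $P : \mathbb{R}^n \to \Omega$,

$$\big(P(x)\big)_i := \begin{cases} a_i & \text{if } x_i \le a_i, \\ x_i & \text{if } x_i \in (a_i, b_i), \\ b_i & \text{if } x_i \ge b_i. \end{cases}$$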
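The componentwise definition of the projection translates almost directly into code. The following is a minimal sketch in Python with NumPy. The language choice and the function name `project_box` are assumptions made for illustration and are not prescribed by this sheet.

```python
import numpy as np


def project_box(x, a, b):
    """Project x componentwise onto the box [a_1, b_1] x ... x [a_n, b_n].

    Hypothetical helper: returns a_i where x_i <= a_i, b_i where x_i >= b_i,
    and x_i otherwise.
    """
    x = np.asarray(x, dtype=float)
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    if np.any(a >= b):
        raise ValueError("expected a_i < b_i for every component")
    # max(x_i, a_i) lifts components below the lower bound,
    # min(., b_i) cuts components above the upper bound.
    return np.minimum(np.maximum(x, a), b)


# Example on the unit cube [0, 1]^3.
p = project_box([-2.0, 0.5, 3.0], a=[0.0, 0.0, 0.0], b=[1.0, 1.0, 1.0])
print(p)  # p is [0.0, 0.5, 1.0]
```

The first component lies below its lower bound and is mapped to $a_1 = 0$, the second is already inside $(a_2, b_2)$ and stays unchanged, and the third lies above its upper bound and is mapped to $b_3 = 1$.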

It can be shown that

$$x^* \text{ satisfies condition (1)} \iff x^* = P\big(x^* - \lambda \nabla f(x^*)\big) \text{ for all } \lambda \ge 0.$$
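The fixed-point characterization can be spot-checked numerically. The sketch below is an illustration under assumptions: the test function $f(x) = \tfrac{1}{2}\|x - c\|^2$ with $c = (2, 0.5)$ on the box $[0, 1]^2$, the helper names `project_box` and `fixed_point_residual`, and the sampled values of $\lambda$ are all chosen here and do not come from the sheet. For this $f$ the constrained minimizer is $x^* = P(c) = (1, 0.5)$, which lies on the boundary and has $\nabla f(x^*) = (-1, 0) \neq 0$.

```python
import numpy as np


def project_box(x, a, b):
    """Componentwise projection onto the box given by bounds a and b."""
    return np.minimum(np.maximum(x, a), b)


def fixed_point_residual(x, grad, a, b, lam):
    """Return ||x - P(x - lam * grad(x))||, which is zero iff x is a
    fixed point of the projected gradient map for this particular lam."""
    return np.linalg.norm(x - project_box(x - lam * grad(x), a, b))


# Assumed test problem: f(x) = 0.5 * ||x - c||^2 on [0, 1]^2.
c = np.array([2.0, 0.5])
grad = lambda x: x - c
a, b = np.zeros(2), np.ones(2)

x_star = np.array([1.0, 0.5])   # boundary minimizer P(c)
x_other = np.array([0.5, 0.5])  # feasible, but not a minimizer

lams = [0.0, 0.1, 1.0, 10.0]
res_star = [fixed_point_residual(x_star, grad, a, b, lam) for lam in lams]
res_other = [fixed_point_residual(x_other, grad, a, b, lam) for lam in lams]

print(res_star)   # all residuals vanish at x_star
print(res_other)  # positive residuals for lam > 0 at x_other
```

Checking finitely many values of $\lambda$ is only a sanity test and not a proof of the equivalence. Note also that the residual is trivially zero for $\lambda = 0$ at every feasible point, so only the values $\lambda > 0$ carry information.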

The gradient projection algorithm (using the normalized gradient as descent direction) works as follows: Given a current iterate $x^k$. Let $d^k := -\dfrac{\nabla f(x^k)}{\|\nabla f(x^k)\|}$