SFB 823 Discussion Paper Nr. 19/2017

A test for separability in covariance operators of random surfaces

Pramita Bagchi, Holger Dette

Ruhr-Universität Bochum, Fakultät für Mathematik, 44780 Bochum, Germany

October 21, 2017

Abstract

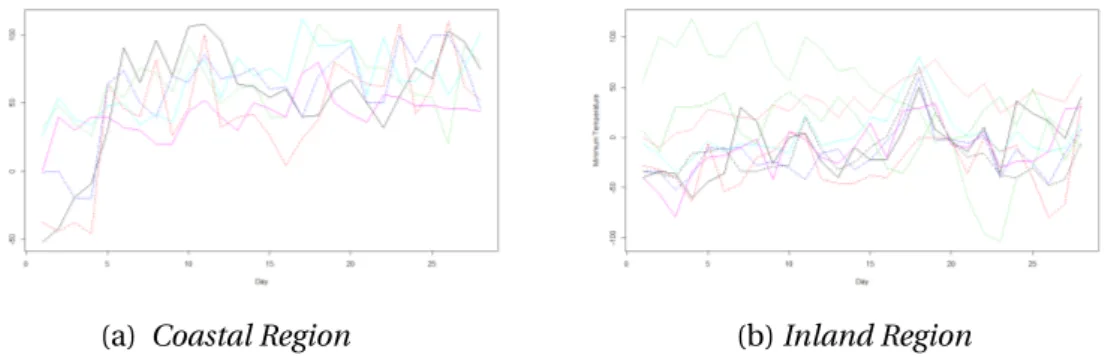

The assumption of separability is a simplifying and very popular assumption in the analysis of spatio-temporal or hypersurface data structures. It is often made in situations where the covariance structure cannot be easily estimated, for example because of a small sample size or because of computational storage problems. In this paper we propose a new and very simple test to validate this assumption. Our approach is based on a measure of separability which is zero in the case of separability and positive otherwise. The measure can be estimated without calculating the full non-separable covariance operator. We prove asymptotic normality of the corresponding statistic with a limiting variance, which can easily be estimated from the available data. As a consequence, quantiles of the standard normal distribution can be used to obtain critical values, and the new test of separability is very easy to implement. In particular, our approach requires neither projections onto subspaces generated by the eigenfunctions of the covariance operator, nor resampling procedures to obtain critical values, nor distributional assumptions, as recently used by Aston et al. (2017) and Constantinou et al. (2017) to construct tests for separability. We investigate the finite sample performance by means of a simulation study and also provide a comparison with the currently available methodology. Finally, the new procedure is illustrated by analyzing wind speed and temperature data.

Keywords: functional data, minimum distance, separability, space-time processes, surface data structures

AMS Subject classification: 62G10, 62G20

1 Introduction

Data which are functional and multidimensional are usually called surface data and arise in areas such as medical imaging [see Skup (2010); Worsley et al. (1996)], spectrograms derived from audio signals or geolocalized data [see Bar-Hen et al. (2008); Rabiner and Schafer (1978)]. In many of these ultra high-dimensional problems a completely nonparametric estimation of the covariance operator is not possible, as the number of available observations is small compared to the dimension. A common approach to obtain reasonable estimates in this context is to impose structural assumptions on the covariance of the underlying process, and in recent years the assumption of separability has become very popular, for example in the analysis of geostatistical space-time models [see Genton (2007); Gneiting et al. (2007), among others]. Roughly speaking, this assumption allows one to write the covariance

$$c(s,t,s',t') = \mathbb{E}[X(s,t)X(s',t')]$$

of a (real valued) space-time process $\{X(s,t)\}_{(s,t)\in S\times T}$ as a product of the space and time covariance functions, that is

$$c(s,t,s',t') = c_1(s,s')\,c_2(t,t'). \tag{1.1}$$

It was pointed out by many authors that the assumption of separability yields a substantial simplification of the estimation problem and thus reduces computational costs in the estimation of the covariance in high dimensional problems [see for example Huizenga et al. (2002); Rougier (2017)]. Despite its importance, there exist only a few tools to validate the assumption of separability for surface data.
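To make the storage argument concrete, the following minimal sketch builds a separable covariance of the form (1.1) on a small grid and compares the number of stored entries with that of the two factors. The grid sizes and the two kernels (an exponential and a Gaussian kernel) are assumptions chosen purely for illustration and are not taken from the paper.

```python
import numpy as np

# Hypothetical grids and kernels, chosen only for illustration.
s = np.linspace(0.0, 1.0, 4)   # spatial grid
t = np.linspace(0.0, 1.0, 3)   # temporal grid

c1 = np.exp(-np.abs(s[:, None] - s[None, :]))    # c1(s, s')
c2 = np.exp(-(t[:, None] - t[None, :]) ** 2)     # c2(t, t')

# Separable covariance c(s,t,s',t') = c1(s,s') c2(t,t') as a 4-way array
# with axes ordered as (s, t, s', t').
c = np.einsum("ij,kl->ikjl", c1, c2)

# Storage needed for the full array versus the two factors.
full_size = c.size                    # (4 * 3) ** 2 entries
factor_size = c1.size + c2.size       # 4 ** 2 + 3 ** 2 entries

# Flattening (s,t) and (s',t') yields the Kronecker product of the factors.
c_matrix = c.reshape(s.size * t.size, s.size * t.size)
is_kron = np.allclose(c_matrix, np.kron(c1, c2))
```

On realistic grids the gap between `full_size` and `factor_size` grows quickly, which is the computational motivation for the separability assumption described above.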

Many authors developed tests for spatio-temporal data. For example, Fuentes (2006) proposed a test based on the spectral representation, and Lu and Zimmerman (2005); Mitchell et al. (2005, 2006) investigated likelihood ratio tests under the assumption of a normal distribution. Recently, Constantinou et al. (2017) derived the joint distribution of the three statistics appearing in the likelihood ratio test and used this result to derive the asymptotic distribution of the (log) likelihood ratio. These authors also proposed alternative tests which are based on distances between an estimator of the covariance under the assumption of separability and an estimator which does not use this assumption.

Aston et al. (2017) considered the problem of testing for separability in the context of hypersurface data. These authors pointed out that many available methods require the estimation of the full multidimensional covariance structure, which can become infeasible for high dimensional data. In order to address this issue they developed a bootstrap test for applications where replicates from the underlying random process are available. To avoid estimation and storage of the full covariance, finite-dimensional projections of the difference between the covariance operator and a nonparametric separable approximation are proposed. In particular, they suggest projecting onto subspaces generated by the eigenfunctions of the covariance operator estimated under the assumption of separability. However, as pointed out in the same reference, the choice of the number of eigenfunctions onto which one should project is not trivial, and the test might be sensitive with respect to this choice.

In this paper we present an alternative and very simple test for the hypothesis of separability in hypersurface data. We consider a setup similar to that of Aston et al. (2017) and proceed in two steps. First, we derive an explicit expression for the minimal distance between the covariance operator and its approximation by a separable covariance operator, where the minimum is taken with respect to the second factor of the tensor product. It turns out that this minimum vanishes if and only if the covariance operator is separable. Second, we directly estimate the minimal distance (and not the covariance operator itself) from the available data. As a consequence, the calculation of the test statistic neither uses an estimate of the full non-separable covariance operator nor requires the specification of subspaces used for a projection. In the main result of this paper we derive the asymptotic distribution of the test statistic, which is normal (after appropriate standardization) under the null hypothesis and under the alternative. The limiting variance under the null hypothesis can easily be estimated, and as a consequence we obtain a very simple test for the hypothesis of separability, which only requires the quantiles of the normal distribution. Moreover, in contrast to the work of Aston et al. (2017), the test proposed here does not require resampling and, from a theoretical perspective, the limit theorems are valid under more general and easier to verify moment assumptions.

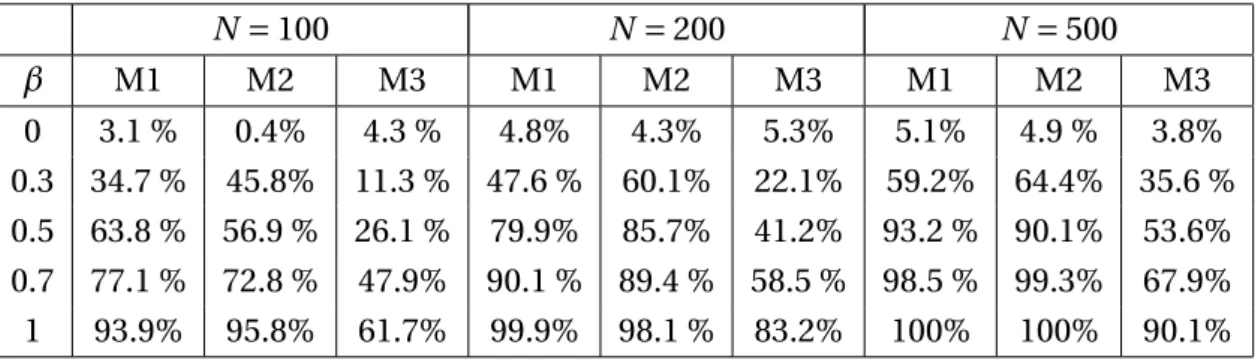

In Section 2 we review some basic terminology and minimize the distance between the covariance operator and separable covariance operators with respect to the second factor of the tensor product. This minimum distance can also be interpreted as a measure of deviation from separability (it is zero in the case of separability and positive otherwise). In Section 3 we propose an estimator of the minimum distance and prove asymptotic normality of a standardised version of this statistic. We also provide a simple estimate of the limiting variance under the null hypothesis and prove its consistency. Section 4 is devoted to an investigation of the finite sample properties of the new test and a comparison with two alternative tests for this problem, which have recently been proposed by Aston et al. (2017) and Constantinou et al. (2017). In particular, we demonstrate that, despite its simplicity, the new procedure has very competitive properties compared to the currently available methodology. Finally, some technical details are deferred to Appendix A.

2 Hilbert spaces and a measure of separability

We begin by introducing some basic facts about Hilbert spaces, Hilbert-Schmidt operators and tensor products. For more details we refer to the monographs of Weidmann (1980), Dunford and Schwartz (1988) or Gohberg et al. (1990). Let $H$ be a real separable Hilbert space with inner product $\langle\cdot,\cdot\rangle$ and norm $\|\cdot\|$. The space of bounded linear operators on $H$ is denoted by $S_\infty(H)$ with operator norm

$$\|T\|_\infty := \sup_{\|f\|\le 1} \|Tf\|.$$

A bounded linear operator $T$ is said to be compact if it can be written as

$$T = \sum_{j\ge 1} s_j(T)\,\langle e_j,\cdot\rangle f_j,$$

where $\{e_j : j\ge 1\}$ and $\{f_j : j\ge 1\}$ are orthonormal sets of $H$, $\{s_j(T) : j\ge 1\}$ are the singular values of $T$ and the series converges in the operator norm. We say that a compact operator $T$ belongs to the Schatten class of order $p\ge 1$ and write $T\in S_p(H)$ if

$$\|T\|_p = \Big(\sum_{j\ge 1} s_j(T)^p\Big)^{1/p} < \infty.$$

The Schatten class of order $p\ge 1$ is a Banach space with norm $\|\cdot\|_p$ and with the property that $S_p(H)\subset S_q(H)$ for $p<q$. In particular we are interested in Schatten classes of order $p=1$ and $2$. A compact operator $T$ is called a Hilbert-Schmidt operator if $T\in S_2(H)$ and trace class if $T\in S_1(H)$. The space of Hilbert-Schmidt operators $S_2(H)$ is also a Hilbert space with the Hilbert-Schmidt inner product given by

$$\langle A,B\rangle_{HS} = \sum_{j\ge 1} \langle Ae_j, Be_j\rangle$$

for each $A,B\in S_2(H)$, where $\{e_j : j\ge 1\}$ is an orthonormal basis (the inner product does not depend on the choice of the basis).
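In finite dimensions these notions reduce to familiar matrix quantities, which the following minimal sketch illustrates. It assumes $H=\mathbb{R}^5$ with the standard basis, so that operators are $5\times 5$ matrices; the helper names `schatten_norm` and `hs_inner` are hypothetical and introduced only for this illustration.

```python
import numpy as np

def schatten_norm(T, p):
    """Schatten p-norm of a matrix T, computed from its singular values."""
    sv = np.linalg.svd(T, compute_uv=False)
    if np.isinf(p):
        return sv.max()                      # operator norm
    return (sv ** p).sum() ** (1.0 / p)

def hs_inner(A, B):
    """Hilbert-Schmidt inner product sum_j <A e_j, B e_j> in the standard basis."""
    return np.sum(A * B)

rng = np.random.default_rng(0)
A = rng.standard_normal((5, 5))
B = rng.standard_normal((5, 5))

trace_norm = schatten_norm(A, 1)         # p = 1
hs_norm = schatten_norm(A, 2)            # p = 2, equals the Frobenius norm
op_norm = schatten_norm(A, np.inf)       # operator norm

# The same inner product evaluated in a different orthonormal basis
# (the columns of a random orthogonal matrix Q).
Q, _ = np.linalg.qr(rng.standard_normal((5, 5)))
hs_inner_other_basis = np.sum((A @ Q) * (B @ Q))
```

The ordering `op_norm <= hs_norm <= trace_norm` reflects the inclusion $S_p(H)\subset S_q(H)$ for $p<q$.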

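For two real separable Hilbert spaces $H_1$ and $H_2$, the tensor product of $H_1$ and $H_2$, denoted as $H := H_1\otimes H_2$, is the Hilbert space obtained by the completion of all finite sums

$$\sum_{i,j=1}^{N} u_i\otimes v_j, \qquad u_i\in H_1,\ v_j\in H_2,$$

under the inner product $\langle u\otimes v, w\otimes z\rangle = \langle u,w\rangle\langle v,z\rangle$, for $u,w\in H_1$ and $v,z\in H_2$. For $C_1\in S_\infty(H_1)$ and $C_2\in S_\infty(H_2)$, the tensor product $C_1\tilde\otimes C_2$ is defined as the unique linear operator on $H_1\otimes H_2$ satisfying

$$(C_1\tilde\otimes C_2)(u\otimes v) = C_1u\otimes C_2v, \qquad u\in H_1,\ v\in H_2.$$

In fact $C_1\tilde\otimes C_2\in S_\infty(H)$ with $\|C_1\tilde\otimes C_2\|_\infty = \|C_1\|_\infty\|C_2\|_\infty$. Moreover, if $C_1\in S_p(H_1)$ and $C_2\in S_p(H_2)$ for $p\ge 1$, then $C_1\tilde\otimes C_2\in S_p(H)$ with $\|C_1\tilde\otimes C_2\|_p = \|C_1\|_p\|C_2\|_p$. For more details we refer to Chapter 8 of Weidmann (1980). In the sequel we denote the Hilbert-Schmidt inner product on $S_2(H)$ with $H = H_1\otimes H_2$ as $\langle\cdot,\cdot\rangle_{HS}$ and that of $S_2(H_1)$ and $S_2(H_2)$ as $\langle\cdot,\cdot\rangle_{S_2(H_1)}$ and $\langle\cdot,\cdot\rangle_{S_2(H_2)}$, respectively.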
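For matrices, the tensor product of operators can be realised as the Kronecker product, and the defining identity and the multiplicativity of the Schatten norms can be checked numerically. The sketch below assumes $H_1=\mathbb{R}^3$ and $H_2=\mathbb{R}^4$, identifies $u\otimes v$ with `np.kron(u, v)`, and uses random matrices in place of genuine covariance operators; `schatten_norm` is a hypothetical helper.

```python
import numpy as np

def schatten_norm(T, p):
    """Schatten p-norm from singular values (p = np.inf gives the operator norm)."""
    sv = np.linalg.svd(T, compute_uv=False)
    return sv.max() if np.isinf(p) else (sv ** p).sum() ** (1.0 / p)

rng = np.random.default_rng(1)
C1 = rng.standard_normal((3, 3))     # operator on H1 = R^3
C2 = rng.standard_normal((4, 4))     # operator on H2 = R^4
u = rng.standard_normal(3)
v = rng.standard_normal(4)

# Tensor product of the operators as a Kronecker product on R^12.
C12 = np.kron(C1, C2)

# Defining property: (C1 (x) C2)(u (x) v) = C1 u (x) C2 v.
lhs = C12 @ np.kron(u, v)
rhs = np.kron(C1 @ u, C2 @ v)

# Norm of the product versus product of the norms, for p = 1, 2, infinity.
norm_pairs = {
    p: (schatten_norm(C12, p), schatten_norm(C1, p) * schatten_norm(C2, p))
    for p in (1, 2, np.inf)
}
```

Each pair in `norm_pairs` should agree up to floating point error, because the singular values of a Kronecker product are the pairwise products of the singular values of its factors.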

2.1 Measuring separability

We consider random elements $X$ in the Hilbert space $H$ with $\mathbb{E}\|X\|^4 < \infty$ (see Chapter 7 of Hsing and Eubank (2015)). Then the covariance operator of $X$ is defined as $C := \mathbb{E}[(X-\mathbb{E}X)\otimes_o(X-\mathbb{E}X)]$, where for $f,g\in H$ the operator $f\otimes_o g : H\to H$ is defined by

$$(f\otimes_o g)h = \langle h,g\rangle f \quad \text{for all } h\in H.$$

Under the assumption $\mathbb{E}\|X\|^4 < \infty$ we have $C\in S_2(H)$. We also assume $\|C\|_2\neq 0$, which essentially means that the random variable $X$ is not degenerate. To test separability we consider the hypothesis

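$$H_0 : C = C_1\tilde\otimes C_2 \ \text{ for some } C_1\in S_2(H_1) \text{ and } C_2\in S_2(H_2). \tag{2.1}$$

Our approach is based on an approximation of the operator $C$ by a separable operator $C_1\tilde\otimes C_2$ with respect to the norm $\|\cdot\|_2$. Ideally, we are looking for

$$D := \inf\Big\{ \|C - C_1\tilde\otimes C_2\|_2^2 \ \Big|\ C_1\in S_2(H_1),\ C_2\in S_2(H_2) \Big\}, \tag{2.2}$$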

such that the hypothesis of separability in (2.1) can be rewritten in terms of the distance $D$, that is

$$H_0 : D = 0 \quad \text{versus} \quad H_1 : D > 0. \tag{2.3}$$

However, it turns out that it is difficult to express $D$ explicitly in terms of the covariance operator $C$. For this reason we proceed in a slightly different way in two steps. First we fix $C_1$ and determine

$$D(C_1) := \inf\Big\{ \|C - C_1\tilde\otimes C_2\|_2^2 \ \Big|\ C_2\in S_2(H_2) \Big\}, \tag{2.4}$$

that is, we minimize $\|C - C_1\tilde\otimes C_2\|_2^2$ with respect to the second factor $C_2$ of the tensor product. In particular we will show that the infimum is in fact a minimum and derive an explicit expression for $D(C_1)$ and its minimizer. Next we show that the resulting minimum $D(C_1)$ vanishes if and only if the hypothesis of separability holds.

For this purpose we have to introduce additional notation and prove several auxiliary results. The main statement is given in Theorem 2.1 (whose formulation also requires the new notation). First, consider the bounded linear operator $T_1 : S_2(H)\times S_2(H_1)\to S_2(H_2)$ defined by

$$T_1(A\tilde\otimes B, C_1) = \langle A, C_1\rangle_{S_2(H_1)}\, B \tag{2.5}$$

for all $C_1\in S_2(H_1)$. Similarly, let $T_2 : S_2(H)\times S_2(H_2)\to S_2(H_1)$ be the bounded linear operator defined by

$$T_2(A\tilde\otimes B, C_2) = \langle B, C_2\rangle_{S_2(H_2)}\, A \tag{2.6}$$

for all $C_2\in S_2(H_2)$.

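Proposition 2.1. The operators $T_1$ and $T_2$ are well-defined, bilinear and continuous with

$$\langle B, T_1(C,C_1)\rangle_{S_2(H_2)} = \langle C, C_1\tilde\otimes B\rangle_{HS}, \tag{2.7}$$

$$\langle A, T_2(C,C_2)\rangle_{S_2(H_1)} = \langle C, A\tilde\otimes C_2\rangle_{HS} \tag{2.8}$$

for all $A, C_1\in S_2(H_1)$, $B, C_2\in S_2(H_2)$ and $C\in S_2(H)$.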

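Proof. By Lemma A.1 in Appendix A the space

$$D_0 := \Big\{ \sum_{i=1}^{n} A_i\tilde\otimes B_i : A_i\in S_2(H_1),\ B_i\in S_2(H_2),\ n\in\mathbb{N} \Big\} \tag{2.9}$$

is a dense subset of $S_2(H_1\otimes H_2)$ (note that a similar result for the space $S_1(H_1\otimes H_2)$ has been established in Lemma 1.6 of the supplementary material in Aston et al. (2017)). For all $B\in S_2(H_2)$, $E = \sum_{i=1}^{n} A_i\tilde\otimes B_i\in D_0$ and $C_1\in S_2(H_1)$, we have

$$\begin{aligned}
\langle B, T_1(E,C_1)\rangle_{S_2(H_2)} &= \Big\langle B, \sum_{i=1}^{n} \langle A_i, C_1\rangle_{S_2(H_1)} B_i \Big\rangle_{S_2(H_2)} = \sum_{i=1}^{n} \langle A_i, C_1\rangle_{S_2(H_1)} \langle B, B_i\rangle_{S_2(H_2)} \\
&= \Big\langle \sum_{i=1}^{n} A_i\tilde\otimes B_i,\ C_1\tilde\otimes B \Big\rangle_{HS} = \langle E, C_1\tilde\otimes B\rangle_{HS}.
\end{aligned} \tag{2.10}$$

Using the fact that

$$\|T_1(E,C_1)\|_2 \le \|T_1(E,C_1)\|_1 = \sup\big\{ \langle B, T_1(E,C_1)\rangle_{S_2(H_2)} : B\in S_2(H_2),\ \|B\|_\infty\le 1 \big\}, \tag{2.11}$$

together with (2.10) and the Cauchy-Schwarz inequality, it follows that

$$\|T_1(E,C_1)\|_2 \le \|C_1\|_2\,\|E\|_2. \tag{2.12}$$

Therefore, for each $C_1\in S_2(H_1)$, we can extend $T_1(\cdot, C_1)$ continuously to $S_2(H)$.

Furthermore, as (2.7) holds for all $C\in D_0$ and the maps $C\mapsto \langle B, T_1(C,C_1)\rangle_{S_2(H_2)}$ and $C\mapsto \langle C, C_1\tilde\otimes B\rangle_{HS}$ are continuous for all $B\in S_2(H_2)$ and $C_1\in S_2(H_1)$, we can conclude that (2.7) holds for all $C\in S_2(H)$.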
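In finite dimensions the operators $T_1$ and $T_2$ amount to partial contractions of a four-way array, and the identities (2.7) and (2.8) can be verified numerically. The following sketch assumes $H_1=\mathbb{R}^m$, $H_2=\mathbb{R}^n$ and represents an operator on $H_1\otimes H_2$ as an $mn\times mn$ matrix in Kronecker ordering; the function names `T1` and `T2` and the random test matrices are assumptions made for illustration.

```python
import numpy as np

def T1(C, C1, m, n):
    """Finite-dimensional T1: contract C with C1 over the H1 indices."""
    C4 = C.reshape(m, n, m, n)            # axes (i, k, j, l) ~ (s, t, s', t')
    return np.einsum("ikjl,ij->kl", C4, C1)

def T2(C, C2, m, n):
    """Finite-dimensional T2: contract C with C2 over the H2 indices."""
    C4 = C.reshape(m, n, m, n)
    return np.einsum("ikjl,kl->ij", C4, C2)

def hs(A, B):
    """Hilbert-Schmidt (Frobenius) inner product of two matrices."""
    return np.sum(A * B)

m, n = 3, 4
rng = np.random.default_rng(2)
A, C1 = rng.standard_normal((2, m, m))
B, C2 = rng.standard_normal((2, n, n))
C = rng.standard_normal((m * n, m * n))   # a generic, non-separable operator

# Definition (2.5) on an elementary tensor: T1(A (x) B, C1) = <A, C1> B.
t1_elementary = T1(np.kron(A, B), C1, m, n)

# Both sides of (2.7) and (2.8) for the generic operator C.
lhs_27, rhs_27 = hs(B, T1(C, C1, m, n)), hs(C, np.kron(C1, B))
lhs_28, rhs_28 = hs(A, T2(C, C2, m, n)), hs(C, np.kron(A, C2))
```

Because only contractions over one pair of indices are needed, the same computation can in principle be organised so that the full $mn\times mn$ matrix is never formed; the sketch forms it only to keep the check transparent.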

Corollary 2.1. For all $C\in S_2(H)$, $C_1\in S_2(H_1)$ and $C_2\in S_2(H_2)$ we have

$$\|T_1(C,C_1)\|_2 \le \|C\|_2\,\|C_1\|_2 \quad \text{and} \quad \|T_2(C,C_2)\|_2 \le \|C\|_2\,\|C_2\|_2.$$

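Proposition 2.2. For any $C\in S_2(H)$ and $C_1\in S_2(H_1)$, we have

$$\langle C, C_1\tilde\otimes T_1(C,C_1)\rangle_{HS} = \|T_1(C,C_1)\|_2^2.$$

Proof. Recall the definition of the set $D_0$ in (2.9) and let $C = \sum_{n=1}^{N} A_n\tilde\otimes B_n\in D_0$, where $A_n\in S_2(H_1)$, $B_n\in S_2(H_2)$. We write

$$\begin{aligned}
\langle C, C_1\tilde\otimes T_1(C,C_1)\rangle_{HS} &= \Big\langle C,\ C_1\tilde\otimes \sum_{n=1}^{N} \langle A_n, C_1\rangle_{S_2(H_1)} B_n \Big\rangle_{HS} \\
&= \sum_{n=1}^{N} \langle A_n, C_1\rangle_{S_2(H_1)} \langle C, C_1\tilde\otimes B_n\rangle_{HS} \\
&= \sum_{n=1}^{N}\sum_{m=1}^{N} \langle A_n, C_1\rangle_{S_2(H_1)} \langle A_m, C_1\rangle_{S_2(H_1)} \langle B_m, B_n\rangle_{S_2(H_2)}.
\end{aligned}$$

On the other hand,

$$\begin{aligned}
\langle T_1(C,C_1), T_1(C,C_1)\rangle_{S_2(H_2)} &= \Big\langle \sum_{n=1}^{N} \langle A_n, C_1\rangle_{S_2(H_1)} B_n,\ \sum_{m=1}^{N} \langle A_m, C_1\rangle_{S_2(H_1)} B_m \Big\rangle_{S_2(H_2)} \\
&= \sum_{n=1}^{N}\sum_{m=1}^{N} \langle A_n, C_1\rangle_{S_2(H_1)} \langle A_m, C_1\rangle_{S_2(H_1)} \langle B_m, B_n\rangle_{S_2(H_2)}.
\end{aligned}$$

Therefore, for all $C_1\in S_2(H_1)$, the functions $f,g : S_2(H)\to\mathbb{R}$ defined by

$$f(C) := \langle C, C_1\tilde\otimes T_1(C,C_1)\rangle_{HS} \quad \text{and} \quad g(C) := \|T_1(C,C_1)\|_2^2$$

are continuous and coincide on the dense subset $D_0$ of $S_2(H)$. So $f(C) = g(C)$ for all $C\in S_2(H)$ and hence the result follows.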

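Theorem 2.1. For each $C\in S_2(H)$ and $C_1\in S_2(H_1)$ the distance

$$D(C_1,C_2) = \|C - C_1\tilde\otimes C_2\|_2^2 \tag{2.13}$$

is minimized at

$$\tilde C_2 = \frac{T_1(C,C_1)}{\|C_1\|_2^2}. \tag{2.14}$$

Moreover, for $C_1\in S_2(H_1)$ the minimum distance in (2.13) is given by

$$D(C_1) = \|C\|_2^2 - \frac{\|T_1(C,C_1)\|_2^2}{\|C_1\|_2^2}. \tag{2.15}$$

In particular $D(C_1)$ is zero if and only if $C = C_1\tilde\otimes C_2$ for some $C_2\in S_2(H_2)$.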

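Proof. We write

$$\|C - C_1\tilde\otimes C_2\|_2^2 = \|C - C_1\tilde\otimes\tilde C_2\|_2^2 + \|C_1\tilde\otimes\tilde C_2 - C_1\tilde\otimes C_2\|_2^2 + 2\langle C - C_1\tilde\otimes\tilde C_2,\ C_1\tilde\otimes\tilde C_2 - C_1\tilde\otimes C_2\rangle_{HS}.$$

For the last term we obtain from (2.14)

$$\begin{aligned}
\langle C - C_1\tilde\otimes\tilde C_2,\ C_1\tilde\otimes\tilde C_2 - C_1\tilde\otimes C_2\rangle_{HS} &= \langle C, C_1\tilde\otimes\tilde C_2\rangle_{HS} - \|C_1\tilde\otimes\tilde C_2\|_2^2 - \langle C, C_1\tilde\otimes C_2\rangle_{HS} + \langle C_1\tilde\otimes\tilde C_2, C_1\tilde\otimes C_2\rangle_{HS} \\
&= \frac{1}{\|C_1\|_2^2}\langle C, C_1\tilde\otimes T_1(C,C_1)\rangle_{HS} - \frac{\|T_1(C,C_1)\|_2^2}{\|C_1\|_2^2} - \langle C, C_1\tilde\otimes C_2\rangle_{HS} + \langle C_2, T_1(C,C_1)\rangle_{S_2(H_2)},
\end{aligned}$$

which is zero by (2.7) and Proposition 2.2. Therefore for all $C_2\in S_2(H_2)$ we have

$$\|C - C_1\tilde\otimes C_2\|_2^2 \ge \|C - C_1\tilde\otimes\tilde C_2\|_2^2,$$

which proves the first assertion of Theorem 2.1.

For a proof of the representation (2.15) we substitute the operator $\tilde C_2$ defined in (2.14) for $C_2$ in the expression of $D(C_1,C_2)$ and obtain

$$\begin{aligned}
D(C_1) = D(C_1,\tilde C_2) &= \|C - C_1\tilde\otimes\tilde C_2\|_2^2 = \langle C - C_1\tilde\otimes\tilde C_2,\ C - C_1\tilde\otimes\tilde C_2\rangle_{HS} \\
&= \|C\|_2^2 + \|C_1\tilde\otimes\tilde C_2\|_2^2 - 2\langle C, C_1\tilde\otimes\tilde C_2\rangle_{HS} \\
&= \|C\|_2^2 + \frac{\|T_1(C,C_1)\|_2^2}{\|C_1\|_2^2} - \frac{2}{\|C_1\|_2^2}\langle C, C_1\tilde\otimes T_1(C,C_1)\rangle_{HS}.
\end{aligned}$$

Then the second assertion follows from Proposition 2.2.

Now assume that $C = C_1\tilde\otimes C_2$ for some $C_2\in S_2(H_2)$. Then (2.5) implies

$$\|T_1(C,C_1)\|_2^2 = \big(\langle C_1, C_1\rangle_{S_2(H_1)}\big)^2\,\|C_2\|_2^2 = \|C_1\|_2^4\,\|C_2\|_2^2,$$

and therefore $D(C_1) = 0$. Conversely, if $D(C_1) = 0$, we have $C = C_1\tilde\otimes\tilde C_2$, with $\|\tilde C_2\|_2 \le \|C\|_2/\|C_1\|_2 < \infty$ by (2.14) and Corollary 2.1.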
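The statement of Theorem 2.1 can be illustrated with matrices. The sketch below is a finite-dimensional check under stated assumptions and not the estimator studied later in the paper: it takes $H_1=\mathbb{R}^3$, $H_2=\mathbb{R}^4$, uses random matrices in place of covariance operators, and the names `T1` and `min_distance` are hypothetical.

```python
import numpy as np

def T1(C, C1, m, n):
    """Contract the (mn x mn) matrix C with C1 over the H1 indices."""
    return np.einsum("ikjl,ij->kl", C.reshape(m, n, m, n), C1)

def min_distance(C, C1, m, n):
    """Return D(C1) from (2.15) and the minimizer from (2.14)."""
    t1 = T1(C, C1, m, n)
    c1_sq = np.sum(C1 ** 2)                       # squared HS norm of C1
    C2_tilde = t1 / c1_sq                         # minimizer (2.14)
    D = np.sum(C ** 2) - np.sum(t1 ** 2) / c1_sq  # minimum distance (2.15)
    return D, C2_tilde

m, n = 3, 4
rng = np.random.default_rng(3)
A, C1 = rng.standard_normal((2, m, m))
B, C2 = rng.standard_normal((2, n, n))

# Separable case: C = C1 (x) C2, so D(C1) should vanish.
C_sep = np.kron(C1, C2)
D_sep, C2_sep = min_distance(C_sep, C1, m, n)

# Non-separable case: add a second elementary tensor.
C_non = C_sep + np.kron(A, B)
D_non, C2_non = min_distance(C_non, C1, m, n)

# Direct evaluation of the distance at the minimizer and at another C2.
dist_at_min = np.sum((C_non - np.kron(C1, C2_non)) ** 2)
dist_elsewhere = np.sum((C_non - np.kron(C1, C2)) ** 2)
```

In the separable case the minimizer recovers `C2` itself, while in the non-separable case `D_non` is strictly positive and agrees with the squared distance evaluated directly at the minimizer.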

2.2 Hilbert-Schmidt integral operators

An important case for applications is the space $H := L^2(S\times T,\mathbb{R})$ of all square integrable functions defined on $S\times T$, where $S\subset\mathbb{R}^p$, $T\subset\mathbb{R}^q$ are bounded measurable sets. If the covariance operator $C$ of the random variable $X$ is a Hilbert-Schmidt operator, it follows from Theorem 6.11 in Weidmann (1980) that there exists a kernel $c\in L^2\big((S\times T)\times(S\times T),\mathbb{R}\big)$ such that $C$ can be characterized as an integral operator, i.e.

$$Cf(s,t) = \int_S\int_T c(s,t,s',t')\,f(s',t')\,ds'\,dt', \qquad f\in L^2(S\times T,\mathbb{R}),$$

almost everywhere on $S\times T$. Moreover, the kernel is given by the covariance kernel of $X$, that is $c(s,t,s',t') = \mathrm{Cov}\big[X(s,t), X(s',t')\big]$, and the space $H$ can be identified with the tensor product of $H_1 = L^2(S,\mathbb{R})$ and $H_2 = L^2(T,\mathbb{R})$.

Similarly, if $C_1$ and $C_2$ are Hilbert-Schmidt operators on $L^2(S,\mathbb{R})$ and $L^2(T,\mathbb{R})$, respectively, there exist symmetric kernels $c_1\in L^2(S\times S,\mathbb{R})$ and $c_2\in L^2(T\times T,\mathbb{R})$ such that

$$C_1f(s) = \int_S c_1(s,s')\,f(s')\,ds', \quad f\in H_1, \qquad C_2g(t) = \int_T c_2(t,t')\,g(t')\,dt', \quad g\in H_2,$$

almost everywhere on $S$ and $T$, respectively. The following result shows that in this case the operators $T_1$ and $T_2$ defined by (2.5) and (2.6), respectively, are also Hilbert-Schmidt (or equivalently integral) operators.

Proposition 2.3. If $C$ and $C_1$ are integral operators with kernels $c\in L^2\big((S\times T)\times(S\times T),\mathbb{R}\big)$ and $c_1\in L^2(S\times S,\mathbb{R})$, then $T_1(C,C_1)$ is an integral operator with kernel given by

$$k(t,t') = \int_S\int_S c(s,t,s',t')\,c_1(s,s')\,ds\,ds'. \tag{2.16}$$

An analogous result is true for the operator $T_2$.
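For data observed on a grid, the kernel in (2.16) can be approximated by a Riemann sum. The following sketch is only an illustration under stated assumptions: it takes $S=T=[0,1]$, a midpoint grid, and a separable test kernel $c(s,t,s',t') = s s' \min(t,t')$ with $c_1\equiv 1$, for which (2.16) gives $k(t,t') = \tfrac14\min(t,t')$ in closed form. The helper names `midpoint_grid` and `kernel_T1` are hypothetical.

```python
import numpy as np

def midpoint_grid(n):
    """Midpoints of n equal subintervals of [0, 1]."""
    return (np.arange(n) + 0.5) / n

def kernel_T1(c, c1, ds):
    """Riemann-sum approximation of the double integral in (2.16).

    c  : array with axes (s, t, s', t') holding c(s, t, s', t') on the grid
    c1 : array with axes (s, s') holding c1(s, s') on the grid
    ds : spacing of the grid on S
    """
    return np.einsum("ikjl,ij->kl", c, c1) * ds * ds

n_s, n_t = 50, 20
s = midpoint_grid(n_s)
t = midpoint_grid(n_t)

a = np.outer(s, s)                     # a(s, s') = s * s'
b = np.minimum.outer(t, t)             # b(t, t') = min(t, t')
c = np.einsum("ij,kl->ikjl", a, b)     # separable test kernel on the grid
c1 = np.ones((n_s, n_s))               # c1(s, s') = 1

k = kernel_T1(c, c1, ds=1.0 / n_s)     # approximates k(t, t') on the t-grid
```

Here the midpoint rule integrates the linear factors exactly, so `k` coincides with `0.25 * b` up to rounding; for general kernels the approximation error depends on the grid resolution.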

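Proof. By Lemma A.2 in Appendix A, for a given $\varepsilon > 0$ there exists an integral operator $C_0$ with kernel $c_0$ such that

$$\|C - C_0\|_2 < \frac{\varepsilon}{2\|C_1\|_2} \quad \text{and} \quad \|c - c_0\|_\infty < \varepsilon/2,$$

where $C_0 = \sum_{n=1}^{N} A_n\tilde\otimes B_n$, and $A_n$ and $B_n$ are finite rank operators with continuous kernels $a_n$ and $b_n$. Note that $\sum_{n=1}^{N} a_n b_n$ is the kernel of the operator $C_0$. Let $K$ be the integral operator with the kernel defined in (2.16) and $K_0$ be the integral operator with kernel

$$k_0(t,t') := \int_S\int_S c_0(s,t,s',t')\,c_1(s,s')\,ds\,ds';$$

then (note that $K_0$ is a Hilbert-Schmidt operator)

$$\|T_1(C,C_1) - K\|_2 \le \|T_1(C,C_1) - T_1(C_0,C_1)\|_2 + \|T_1(C_0,C_1) - K_0\|_2 + \|K_0 - K\|_2. \tag{2.17}$$

By (2.12) the first term is bounded by $\|C_1\|_2\,\|C - C_0\|_2 < \varepsilon/2$. To handle the second term note that for any $f\in H_2$,

$$\begin{aligned}
T_1(C_0,C_1)f(t) &= T_1\Big(\sum_{n=1}^{N} A_n\tilde\otimes B_n,\ C_1\Big)f(t) = \sum_{n=1}^{N} \langle A_n, C_1\rangle_{S_2(H_1)}\, B_nf(t) \\
&= \sum_{n=1}^{N} \int_T\int_S\int_S a_n(s,s')\,c_1(s,s')\,b_n(t,t')\,f(t')\,ds\,ds'\,dt'
\end{aligned}$$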